Container images simplified with Ko

October 10, 2022

In a previous article, I wrote about how, and why, you might want to use the Google Open Source group’s Jib tool to build your Java application container images. Jib builds slim, JVM-based, OCI-compliant images that follow best-practice guidelines without the need for a container runtime like Docker, and it removes the need to write and manage Dockerfiles. What if you are building Go applications, though? There is a similar open source tool for Go called Ko.

Note: During the drafting of this article, the Ko project was migrated from a Google-owned GitHub repository to its own top-level organization, "ko-build". We have updated the wording in this post to reflect this, but references to "Google Ko" may still be found online and in module names for a while. Be assured that this is the same project. Congratulations to the Ko project for the success that merited this migration!

In this article, we’ll look at using Ko to build container images without Dockerfiles, generate SBOMs, and integrate with Kubernetes.

What is Ko?

Ko is a single-binary command line tool designed to be used in your development process wherever you run the Go compiler today. In addition to compiling your application, it generates an ultra-slim container image that has your application installed in it. Like Jib, Ko will push the image to a registry or drop it into your local Docker image cache, depending on how you configure and run it.

Ko also has a few additional tricks up its sleeve related to software bill of materials (SBOM) construction as well as Kubernetes integration to help make iterative development and deployment processes super simple.

What problems is Ko trying to solve?

Many programmers, regardless of the language they work with, are new to container construction and often have a lot of questions about image building such as:

Which base image should I use, and is it compliant with my organization’s policies?

How do I best combine commands to minimize layer bloat?

How much of my app should I copy into the image to run my application?

What tooling do I need to learn to build the image? (Docker? Buildah? BuildKit?)

Are there standard annotations I need to include per my organization’s requirements?

Application architects and leads also want to make governance and standards implementation easy for their teams to follow, but maintaining uniform practices can be challenging when every team has unique and organically crafted Dockerfile patterns.

Security teams also care greatly about what goes into an image and the tooling used to construct it. For example, the practice of exposing the Docker engine — or the host machine’s docker.sock — to a CI build node can give build environments elevated access levels on those nodes.

“[The Docker] daemon… has a lot more capabilities beyond building and interacting with registries. Without additional security tooling, any user who can trigger a docker build on this machine can also perform a docker run to execute any command they like on the machine… Not only can they run any command they like, but also, if they use this privilege to perform a malicious action, it will be hard to track down who was responsible.”

— Container Security by Liz Rice, Chapter 6

On the other hand, introducing new build tools can be complex and increase the learning curve for those in charge of the build systems. Ko aims to address each of these areas while providing a superior experience to the developers using it.

Image building with and without Ko

To describe how Ko addresses the above-listed challenges, let's first look at an example of how it compares to existing Go + Docker build steps.

Building the image without Ko

For this example, we will build the classic Go tutorial application, the “Hello World” web app.

1. In an empty directory, create the following as hello.go:

package main

import (
	"fmt"
	"net/http"
)

func main() {
	http.HandleFunc("/", HelloWebServer)
	http.ListenAndServe(":8080", nil)
}

func HelloWebServer(w http.ResponseWriter, r *http.Request) {
	fmt.Fprintf(w, "Hello, %s!", r.URL.Path[1:])
}

2. We will go ahead and build the binary as part of our Dockerfile, as that is a common pattern:

FROM golang AS build

WORKDIR /go/src

COPY . .

RUN GOOS=linux go build -ldflags "-linkmode external -extldflags -static" -a hello.go

FROM gcr.io/distroless/static:nonroot

USER nonroot:nonroot

COPY --from=build /go/src/hello .

EXPOSE 8080

CMD ["./hello"]

This is a multi-stage build, with the first stage named build, based on the official golang image. In this stage, we copy the content of our app (just the hello.go file at the moment) to the image under /go/src and run go build with the parameters needed to produce a static binary.

In the second stage, we start from the Google Distroless project’s static:nonroot base image, set the default user to nonroot, copy the hello binary from the build stage into the top-level directory, set some metadata about the port we want to expose, and define that ./hello gets run by default at container startup.

Why two stages?

It is a common practice to do the build of your application in the Dockerfile as this guarantees that the right version of your compiler gets used both on a developer’s workstation and in any automated builds. A common error, though, is to deploy the same image that the build happened in. This is a security risk because you end up including not only extraneous tooling like the compiler, but also the source code of your application in that deployed image. If an attacker is able to exploit a vulnerability or get a copy of your image, they will have a lot of information and tools at their disposal to expand their attack. By starting from the distroless/static:nonroot base image, we have an extremely minimal filesystem with a non-root default user.

3. With our Dockerfile sorted out, we now can use the docker build command to construct a locally cached image and give it a tag:

$ docker build -t localhost:5000/hello-go:1.2.3 .

[+] Building 5.6s (12/12) FINISHED

=> [internal] load build definition from Dockerfile

=> => transferring dockerfile: 298B

=> [internal] load .dockerignore

=> => transferring context: 73B

=> [internal] load metadata for gcr.io/distroless/static:nonroot

=> [internal] load metadata for docker.io/library/golang:latest

=> [stage-1 1/2] FROM gcr.io/distroless/static:nonroot

=> CACHED [build 1/4] FROM docker.io/library/golang

=> [internal] load build context

=> => transferring context: 5.15kB

=> [build 2/4] WORKDIR /go/src

=> [build 3/4] COPY . .

=> [build 4/4] RUN GOOS=linux go build -ldflags "-linkmode external -extldflags -static" -a hello.go

=> [stage-1 2/2] COPY --from=build /go/src/hello .

=> exporting to image

=> => exporting layers

=> => writing image sha256:eb9735e61e1dec63bd557dd5c61d8789733f2f4456a0d01687816bd0d135a7bc

=> => naming to localhost:5000/hello-go:1.2.3

$ docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

localhost:5000/hello-go 1.2.3 eb9735e61e1d 11 minutes ago 9.23MB

Note: Your output may look a little different depending on which version of Docker you are running and whether you have BuildKit enhancements enabled.

4. For others to use this image, we need to push it to a registry. In this example, I’m running a local registry on my workstation at port 5000 via the registry:2 image from Docker Hub. (This is why I tagged the image with the localhost:5000/ prefix.)

Pushing to a registry with Docker is pretty straightforward using the docker push command:

$ docker push localhost:5000/hello-go:1.2.3

The push refers to repository [localhost:5000/hello-go]

0ba424468cb9: Pushed

ca623f32e759: Pushed

1.2.3: digest: sha256:ddcabff499d90fdf6850ef0b7addb33db7def…

Note: If this were a managed registry, I would have needed to authenticate to it with docker login first.

5. Finally, to run our image, we could use docker run or an appropriate kubectl deployment. For simplicity’s sake, we’ll do the former:

$ docker run --rm -d -p 8080:8080 localhost:5000/hello-go:1.2.3

Unable to find image 'localhost:5000/hello-go:1.2.3' locally

1.2.3: Pulling from hello-go

45e68f4d0d8c: Already exists

ce1a03145a01: Already exists

Digest: sha256:ddcabff499d90fdf6850ef0b7addb33db7defe4669f9af1079894e84ad407199

Status: Downloaded newer image for localhost:5000/hello-go:1.2.3

2ef3c3dc0363ad10e9bf21baa7e78c63ca4df904bcba1fdab3af7732fce3857d

We can then test the app with a simple curl command:

$ curl http://localhost:8080/Patch

Hello, Patch!
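The same check can be run in Go without a container or an open port. The following is a minimal sketch, assuming the HelloWebServer handler from hello.go; the greet helper is a name I made up for illustration. It uses the standard library's net/http/httptest package to send an in-memory request to the handler.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// HelloWebServer is the handler from hello.go, repeated here so the
// sketch is self-contained.
func HelloWebServer(w http.ResponseWriter, r *http.Request) {
	fmt.Fprintf(w, "Hello, %s!", r.URL.Path[1:])
}

// greet (a hypothetical helper) sends an in-memory GET request for the
// given path to the handler and returns the response body.
func greet(path string) string {
	req := httptest.NewRequest(http.MethodGet, path, nil)
	rec := httptest.NewRecorder()
	HelloWebServer(rec, req)
	return rec.Body.String()
}

func main() {
	// Same request as the curl command above.
	fmt.Println(greet("/Patch"))
}
```

A check like this exercises only the handler logic. It does not replace running the built image, which is what the curl test verifies.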

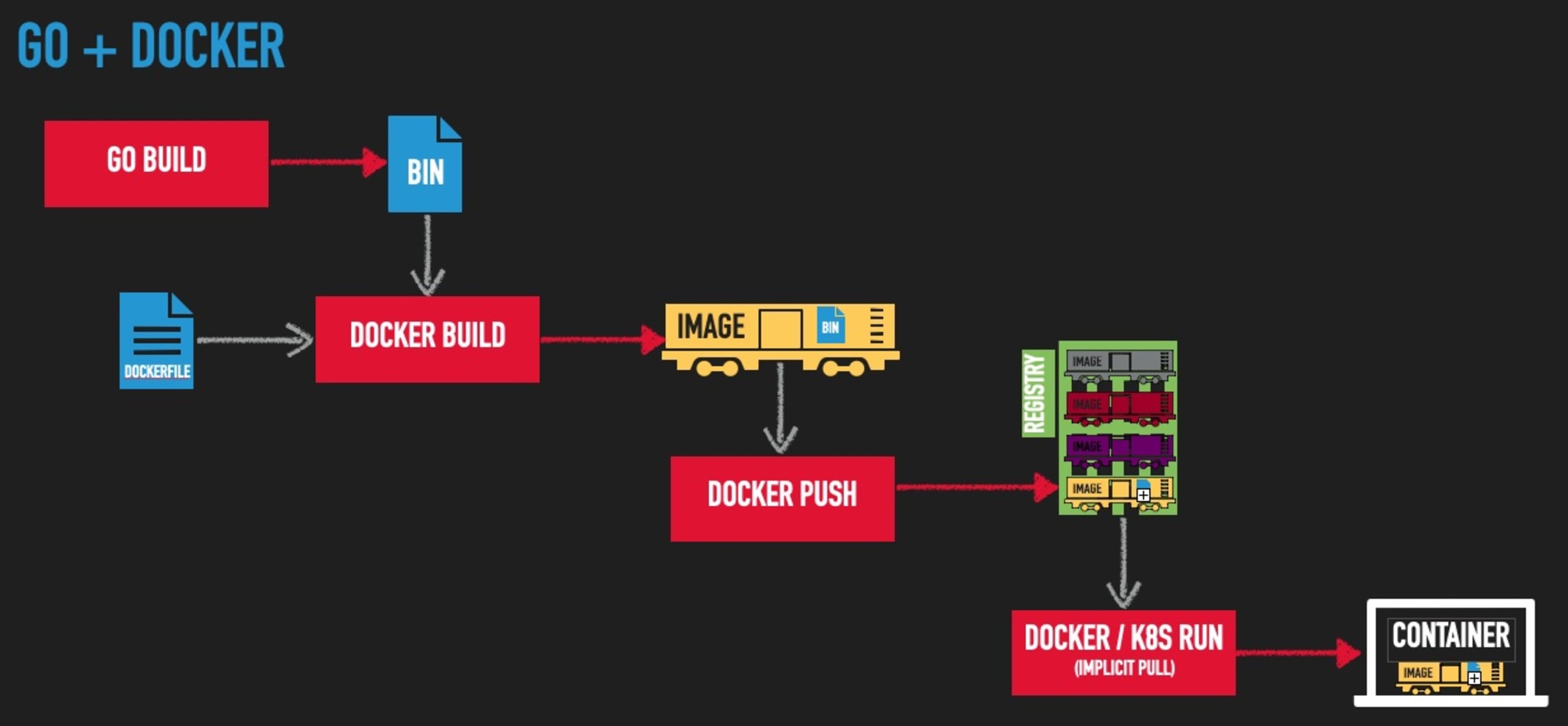

If we were to visualize the steps we just performed, it might look something like this:

Now let’s rewind back to the beginning and see how this would work with Ko.

Building the image with Ko

1. We’ll start with the same hello.go file in an empty directory:

package main

import (
	"fmt"
	"net/http"
)

func main() {
	http.HandleFunc("/", HelloWebServer)
	http.ListenAndServe(":8080", nil)
}

func HelloWebServer(w http.ResponseWriter, r *http.Request) {
	fmt.Fprintf(w, "Hello, %s!", r.URL.Path[1:])
}

2. Next, we set our image registry in an environment variable and run ko build:

$ export KO_DOCKER_REPO=localhost:5000

$ ko build hello.go

2022/09/19 14:12:37 No matching credentials were found, falling back on anonymous

2022/09/19 14:12:40 Using base gcr.io/distroless/static:nonroot@sha256:2a9e2b4fa771d31fe3346a873be845bfc2159695b9f90ca08e950497006ccc2e for hello.go

2022/09/19 14:12:40 Building hello.go for linux/amd64

2022/09/19 14:12:44 Publishing localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:latest

2022/09/19 14:12:44 existing blob: sha256:2952e4f69ebf4bea5cc557f73626f95649cb546424fd998481ba690a08d9db7f

2022/09/19 14:12:44 existing blob: sha256:a706af0bb599ee120bd57c0e6abca55f66fd714f9e74706d9c97a583fc79d37e

2022/09/19 14:12:44 localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:sha256-3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c.sbom: digest: sha256:7d444debc3cd2d5545e88606dc529fa7ed90b1bb581ff8ac30b0f475b68d4ec0 size: 367

2022/09/19 14:12:44 Published SBOM localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:sha256-3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c.sbom

2022/09/19 14:12:44 existing blob: sha256:5ec5232d47ab0ad088792a191b672fe6ec27db63b19daebd7322ad64a2cd8676

2022/09/19 14:12:44 existing blob: sha256:250c06f7c38e52dc77e5c7586c3e40280dc7ff9bb9007c396e06d96736cf8542

2022/09/19 14:12:45 pushed blob: sha256:2d23903e55394a021ba4936cfad8ccbec6998413164416fcae4f6bf888665fce

2022/09/19 14:12:45 pushed blob: sha256:1cd0595314a53d179ddaf68761c9f40c4d9d1bcd3f692d1c005938dac2993db6

2022/09/19 14:12:45 localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:latest: digest: sha256:3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c size: 750

2022/09/19 14:12:45 Published localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7@sha256:3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c

localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7@sha256:3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c

That command:

compiled our application into a static binary

put it into a well-formed image, and

pushed it to my registry.

No Dockerfile or container runtime engine was required to do any of this. Additionally, you’ll notice references in the output about an SBOM being created and published; we’ll come back to that later.

Note: The image name here contains an MD5 hash that, by default, is computed from the import path of your Go application. Since we don’t have a full Go module in this example, that hash is simply taken from hello.go. See the Ko documentation for more information on image naming.
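To make the naming scheme in the note concrete, here is a rough sketch of how such a name could be derived. It is my own illustration, not Ko's code: the imageName function is hypothetical, and the exact string Ko hashes may differ between versions, so the result is not guaranteed to match what ko build prints.

```go
package main

import (
	"crypto/md5"
	"encoding/hex"
	"fmt"
	"path"
)

// imageName (hypothetical) mimics the scheme described in the note:
// the last element of the import path, a dash, and the hex-encoded MD5
// of the full import path, all placed under the target repository.
func imageName(repo, importPath string) string {
	sum := md5.Sum([]byte(importPath))
	name := path.Base(importPath) + "-" + hex.EncodeToString(sum[:])
	return path.Join(repo, name)
}

func main() {
	// The import path is the example module used later in this article.
	fmt.Println(imageName("localhost:5000", "github.com/myteam/hello"))
}
```

The hash keeps names unique when two packages share the same final path element, at the cost of a less readable image name.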

3. Now we will run the image via docker run:

$ docker run --rm -d -p 8080:8080 localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:latest

Unable to find image 'localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:latest' locally

latest: Pulling from hello.go-7a204cfb24536a350234d9132276cae7

1cd0595314a5: Pull complete

250c06f7c38e: Pull complete

5ec5232d47ab: Pull complete

Digest: sha256:3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c

Status: Downloaded newer image for localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7:latest

WARNING: The requested image's platform (linux/amd64) does not match the detected host platform (linux/arm64/v8) and no specific platform was requested

70877dcd53d23c66873e8e1e5a2092b46740e699b214a545e386113e5e098d7c

... and test with curl:

curl http://localhost:8080/ko

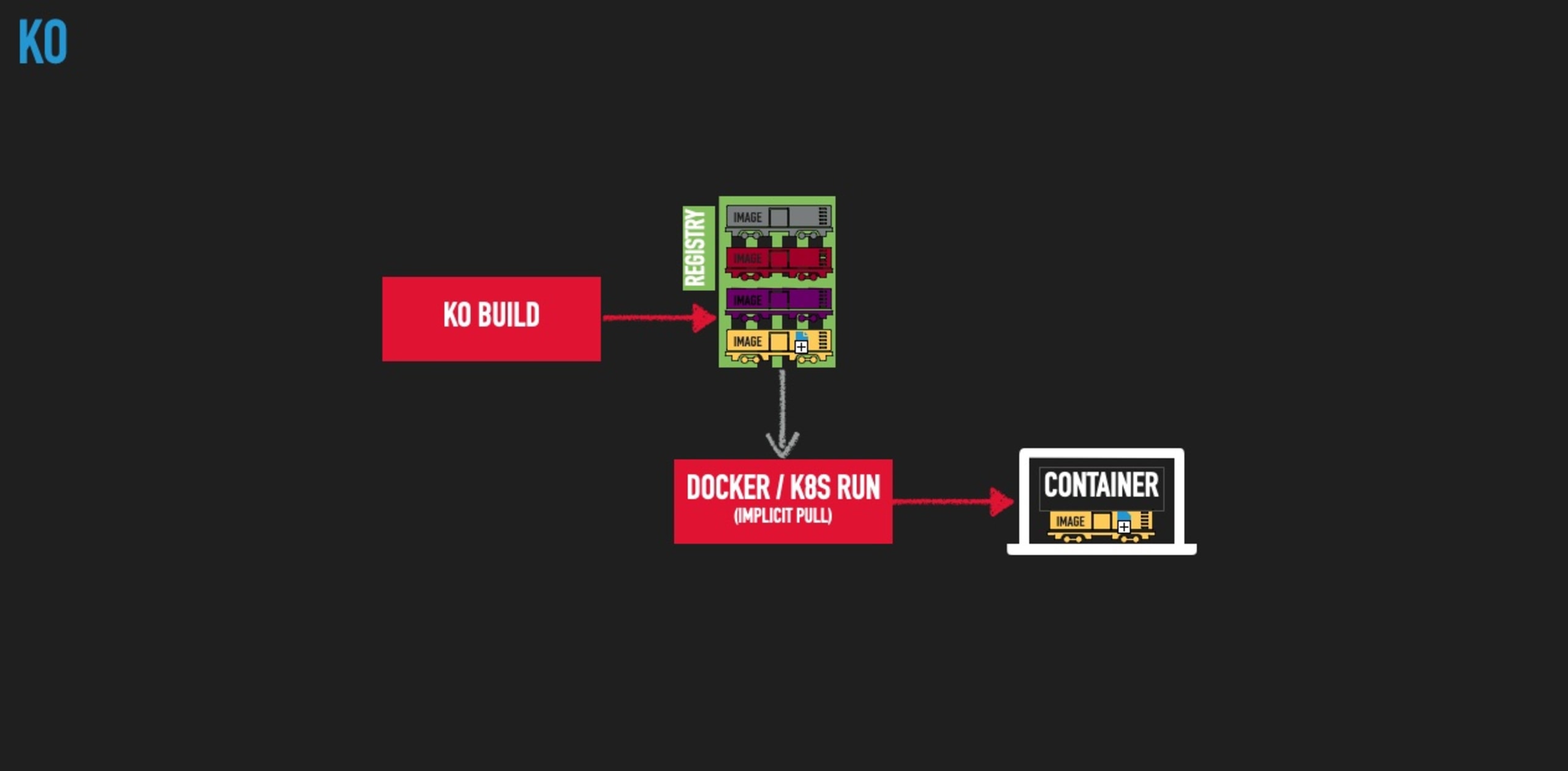

Hello, ko!Visualizing the Ko pipeline might look something like this:

By letting Ko do the heavy lifting of image authoring, creation, and pushing, we reduced the number of steps to perform, the dependence on the container runtime engine at build time, and the expertise and maintenance requirements that the Dockerfile required.

Smart defaults, deterministic specializations

Ko users benefit from best practices defined collectively by the open source community, but what if your organization has custom standards that differ from those defaults? This is where Ko’s command line options and .ko.yaml configuration file come in handy.

Custom base image

Let’s say, for example, that your company has a specific image that all Go applications must use as their base. Simply include a defaultBaseImage: line in a .ko.yaml file in the top-level directory with that image as its value.

defaultBaseImage: repo.mycorp.com/myteam/corp-approved-scratch:220923

Go compiler flags

To explicitly specify the Go compiler -ldflags as we did in our original example, add a builds: entry to the same .ko.yaml file with appropriate settings as specified in the Ko documentation.

builds:
- id: hello
  dir: .
  main: hello.go
  env:
  - GOOS=linux
  - CGO_ENABLED=0
  ldflags:
  - -extldflags "-static"
  - -linkmode external

Docker labels

Docker labels — a.k.a. OCI annotations — are a way to apply metadata to an image that is useful for referencing information such as the source repository it came from, CI build that created it, or any other data your team wants to embed. For more about image labels and when you might want to use them, check my blog on How and when to use Docker Labels.

Ko supports adding labels via its command line --image-label flag:

$ ko build hello.go --image-label foo=bar -L --image-label org.opencontainers.image.source=https://repo.mycorp.com/superteam/hello

…

ko.local/hello.go-7a204cfb24536a350234d9132276cae7:acf1dce794131205d488ed8fe4818866ebf509a61f4cf60aa07463e2b054d97d

$ docker image inspect ko.local/hello.go-7a204cfb24536a350234d9132276cae7:latest --format '{{json .Config.Labels}}'

{"foo":"bar","org.opencontainers.image.source":"https://repo.mycorp.com/superteam/hello"}

Note: As of the publishing of this article, there is no way to specify image labels from the .ko.yaml configuration file but there is an open issue for adding that functionality.
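If your organization requires certain labels, the JSON printed by the docker image inspect command above is easy to check automatically. The sketch below assumes a made-up policy (the list of required keys) and a hypothetical missingLabels helper. It only parses the label JSON and does not call Docker itself.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// missingLabels (hypothetical) parses the JSON printed by
// docker image inspect --format '{{json .Config.Labels}}' and returns
// the required keys that are absent, in the order they were requested.
func missingLabels(labelsJSON string, required []string) ([]string, error) {
	labels := map[string]string{}
	if err := json.Unmarshal([]byte(labelsJSON), &labels); err != nil {
		return nil, err
	}
	var missing []string
	for _, key := range required {
		if _, ok := labels[key]; !ok {
			missing = append(missing, key)
		}
	}
	return missing, nil
}

func main() {
	// Label JSON copied from the inspect output above.
	out := `{"foo":"bar","org.opencontainers.image.source":"https://repo.mycorp.com/superteam/hello"}`
	// The required keys are an assumed organizational policy.
	missing, err := missingLabels(out, []string{
		"org.opencontainers.image.source",
		"org.opencontainers.image.revision",
	})
	if err != nil {
		panic(err)
	}
	fmt.Println("missing labels:", missing)
}
```

A check like this could run as a CI step after ko build, failing the build when the returned slice is not empty.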

Other interesting Ko features

Image SBOMs

As we saw above, Ko will automatically generate and push an SBOM for your new container image. By default, it will be in SPDX format, but CycloneDX is also available by passing the flag --sbom=cyclonedx on the command line. You can also turn this feature off by passing --sbom=none; these and other configuration details are in the Ko documentation.

A full discussion of SBOMs and their role in creating a secure supply chain is out of the scope of this article but if you’d like to learn more, check out Building SBOMs for open source supply chain security.
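The build log earlier in this article shows where the SBOM ends up: in the same repository as the image, under a tag derived from the image digest. As a small sketch inferred only from that log output (sbomTag is a hypothetical name, and the convention may change between Ko versions), the tag can be derived like this:

```go
package main

import (
	"fmt"
	"strings"
)

// sbomTag (hypothetical) derives the tag seen in the build log: the
// image digest with its ":" replaced by "-" and ".sbom" appended.
func sbomTag(digest string) string {
	return strings.Replace(digest, ":", "-", 1) + ".sbom"
}

func main() {
	// Repository and digest copied from the ko build output above.
	repo := "localhost:5000/hello.go-7a204cfb24536a350234d9132276cae7"
	digest := "sha256:3925979ac92afb8cb89fc80438097873daf067195e4d0b9d2fd6f55d6201355c"
	fmt.Println(repo + ":" + sbomTag(digest))
}
```

Because the tag is computed from the digest, anyone who knows which image they pulled can locate the matching SBOM without extra metadata.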

Kubernetes integration

If your image needs to be tested in the context of a Kubernetes cluster, you undoubtedly have run into the hassle of needing to manage a recurring cycle of steps like:

Build my image

Deploy it to my registry (or otherwise load it into the Kubernetes cluster)

Update my deployment YAML with the new image tag

Redeploy via kubectl

Ko can make this much easier by automating all of these steps into a single command: ko apply. With a simple change to your deployment YAML, replacing the image tag with a specially formatted ko:// one, ko apply will automatically perform all of the above steps for you, deploying to whatever cluster you have actively set in your kubectl config context.

For example, let’s imagine you have a sandbox or locally running Kubernetes cluster and need to iteratively deploy and test your work as you go. Here is a deployment manifest for our “hello” application to deploy there:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-server
spec:
  selector:
    matchLabels:
      run: hello-server
  replicas: 2
  template:
    metadata:
      labels:
        run: hello-server
    spec:
      containers:
      - name: hello-server
        imagePullPolicy: IfNotPresent
        image: ko://github.com/myteam/hello
        ports:
        - containerPort: 8080
          name: http

You can see that the image: line contains ko://, followed by the import path of our application’s Go module on GitHub.
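To illustrate what the ko:// prefix marks, here is a simplified sketch that pulls the Go import paths out of a manifest. It is an illustration under my own assumptions, not how Ko works internally: koImportPaths is a hypothetical helper that matches lines as text, whereas a real tool would parse the YAML.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

const koPrefix = "ko://"

// koImportPaths (hypothetical) scans manifest text line by line and
// returns the import path from every "image: ko://..." entry it finds.
func koImportPaths(manifest string) []string {
	var paths []string
	scanner := bufio.NewScanner(strings.NewReader(manifest))
	for scanner.Scan() {
		line := strings.TrimSpace(scanner.Text())
		line = strings.TrimPrefix(line, "- ") // allow "- image: ..." list items
		if !strings.HasPrefix(line, "image:") {
			continue
		}
		ref := strings.TrimSpace(strings.TrimPrefix(line, "image:"))
		ref = strings.Trim(ref, `"'`) // drop optional quotes
		if strings.HasPrefix(ref, koPrefix) {
			paths = append(paths, strings.TrimPrefix(ref, koPrefix))
		}
	}
	return paths
}

func main() {
	manifest := `
      containers:
      - name: hello-server
        image: ko://github.com/myteam/hello
`
	fmt.Println(koImportPaths(manifest))
}
```

Each import path found this way is something to build and publish, after which the ko:// reference can be swapped for the published image reference.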

Now, we simply run ko apply and give it the YAML file in an -f flag like we would with kubectl:

$ ko apply -f myfile.yaml

2022/09/27 14:35:51 Using base gcr.io/distroless/static:nonroot@sha256:2a9e2b4fa771d31fe3346a873be845bfc2159695b9f90ca08e950497006ccc2e for github.com/myteam/hello

2022/09/27 14:35:51 Building github.myteam/hello for linux/amd64

…

2022/09/27 14:35:56 Published myteam/golang-ea0a77f5cbe6ba6aea599ad83048ae7b@sha256:bd7eb1052cf5b8664890f23683930f07423aba0089150bb818a53e76ef2ebf68

deployment.apps/hello-server created

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

pod/hello-server-5b5cc95db4-gc9rn 1/1 Running 0 10s

pod/hello-server-5b5cc95db4-rhwnp 1/1 Running 0 10s

We can now test our application, make a change, and re-run ko apply -f myfile.yaml as many times as we need, and Ko will do all the heavy lifting for us!

As you would expect, there are other Kubernetes commands that you likely would need, such as:

delete for removing your deployments, and

resolve for generating YAML that you can feed into kubectl or other tools.

See https://github.com/ko-build/ko#kubernetes-integration for full documentation details.

Challenges to using Ko

We’ve talked about all of the reasons why you might want to take the plunge and start using Ko in your workflows, but before you start, let’s talk about some of the challenges that you may face using Ko.

Cross-platform concerns

If you develop on a non-Linux based machine, the lack of a container runtime can, in some ways, make things a little harder. Having a container based build image allows you to encapsulate both the build tools as well as the OS and its libraries into a common base build image and effectively ignore the fact that your workstation platform may not match that of your production environment.

Case in point: The Go + Docker part of the example above will work on just about any platform because, regardless of where you build it, the golang base image provides the Debian-based glibc libraries that the Go builder needs to create the static binary. If, however, you try to run the Ko example with the same ldflags options on a macOS machine, you’ll get errors about missing libraries needed for static linking.

This is because macOS/Darwin does not include the libraries needed for cross-compilation of a Linux static binary (at least not at the time I am writing this). This is not to pick on Apple too much: similar issues can come up for disparate CPU architectures with Linux and Windows WSL workstations too.

Skillset atrophy

The skills and knowledge needed to create and maintain well-crafted container images are a valuable commodity, and using tools that abstract them away can reduce a team’s ability to deal with issues when something fails. In fact, the fewer people on a team who understand container technologies, the greater the dependence on those few when it comes to troubleshooting and maintenance at runtime. In extreme cases, this can lead to burnout, attrition, and/or outages.

Security complacency

If the process of container image construction becomes completely automated away from the developers, compliance with processes like image vulnerability scanning may falter. Vulnerabilities in un-updated images, packages, libraries, etc. can creep in, exposing your application to attack, so make sure such scanning is still being done via other automated means such as:

Build scripts or Makefiles

Git hooks

CI build steps

If you are not already doing image scanning, Snyk offers free container image scanning that finds vulnerabilities in images and provides simple, actionable remediation advice.

Simplifying container images

Ko — and other alternative image building tools — can greatly simplify your Go application container image construction tasks and help streamline and standardize the way your teams build images. One of the greatest benefits is to your CI tooling as you no longer need to expose a container runtime to your build environment, greatly reducing privileged access attack vectors in your SDLC. Regardless of whether you choose to use a build tool like Ko, be sure your teams are well versed in container technologies and the security ramifications of any processes and tools they use to build their images.
